Marguerite.jl v0.1: A Differentiable Frank-Wolfe Solver

I'm releasing Marguerite.jl, a differentiable Frank-Wolfe (conditional gradient) solver for convex and bilevel optimization in Julia. The package is named in honor of Marguerite Frank (1927–2024), co-inventor of the Frank-Wolfe algorithm (1956).

Marguerite.jl finds parameterized solutions to optimization problems of the form

where is a compact convex set parameterized by , which is accessed via a linear minimization oracle (LMO). In particular, the LMO returns solutions to approximate program

This "projection-free" paradigm is useful in instances where it is undesirable to project onto the constraint set. The ideal case is where there is a directly computable solution for the linear subproblem at each iteration. It's even better if it's cheap to evaluate.

Why Marguerite.jl?

Marguerite.jl is built for simple and fast bilevel optimization, meaning optimization programs that appear as

It achieves this by implementing highly optimized implicit differentiation for a restrictive–-but expressive–-selection of constraints. It then abstracts that away into a simple, minimalist interface.

The key features:

Differentiable solve: Implicit differentiation via

ChainRulesCore.rrulegives exact gradients at convergence, without unrolling the solver iterations. The Hessian system is solved by conjugate gradient with Hessian-vector products, so memory is .Bilevel optimization:

bilevel_solvebackpropagates through the solver to learn parameters of constrained inner problems. This handles objectives and constraints that depend on the outer parameters .Zero-allocation inner loop: Pre-allocated buffers and

@inboundshot paths. The solver itself allocates nothing in the main loop.Minimal API: Everything goes through

solve(f, lmo, x0; ...). Provide a manual gradient withgrad=, or let ForwardDiff handle it automatically.

Quick example

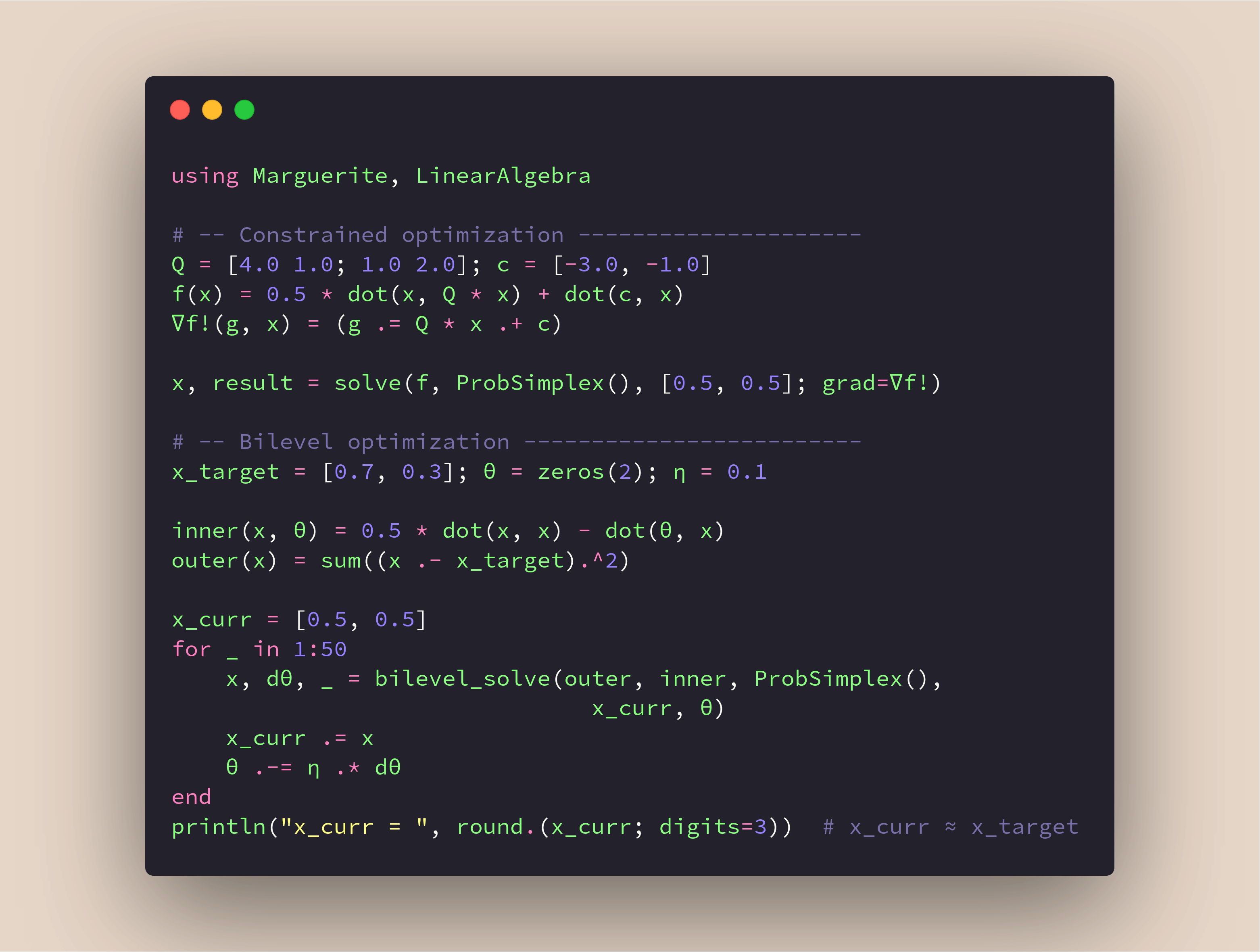

using Marguerite, LinearAlgebra

# Minimize a quadratic on the probability simplex

Q = [4.0 1.0; 1.0 2.0]; c = [-3.0, -1.0]

f(x) = 0.5 * dot(x, Q * x) + dot(c, x)

x, result = solve(f, ProbSimplex(), [0.5, 0.5])Bilevel optimization

Many operations and planning problems have nested structure: An outer loss wraps a constrained inner problem. Marguerite.jl differentiates through the inner solve via implicit differentiation:

x_target = [0.7, 0.3]; θ = zeros(2); η = 0.1

inner(x, θ) = 0.5 * dot(x, x) - dot(θ, x)

outer(x) = sum((x .- x_target).^2)

x_curr = [0.5, 0.5]

for _ in 1:50

x, dθ, _ = bilevel_solve(outer, inner, ProbSimplex(),

x_curr, θ)

x_curr .= x

θ .-= η .* dθ

end

# x_curr ≈ [0.7, 0.3]Connection to randomized switching

Marguerite.jl grew out of my work on network reconfiguration with Dmitrii Ostrovskii and Daniel Molzahn. In that work, we optimize over the space of switching probabilities (try it live!). The structure of this problem makes Frank-Wolfe particularly effective. The graph problems example in the docs demonstrates this connection: minimizing the Laplacian pseudoinverse quadratic form over a masked knapsack polytope.

Install

Requires Julia 1.12+:

using Pkg

Pkg.add(url="https://github.com/samtalki/Marguerite.jl")Your feedback is welcome – please open issues on GitHub.